You detect AI cheating in technical interviews by combining written environment rules, follow-ups that change the problem mid-session, and optional telemetry that never auto-disqualifies without a human review.

Those controls surface declared in-session assistance, device rules, and the dev-environment bar (editors, extensions, AI in the IDE versus a browser), on top of the exercise you set in the stack you care about (language, frameworks, and the infra you name for the role). They also show whether reasoning survives when the problem shifts mid-session. They do not answer a separate question: who is in the session, and whether that presence is authentic.

CTOs, engineering managers, and hiring managers who run remote technical interviews and take-home assessments for software teams are often accountable for both tracks at once. For identity, deepfake, and proxy risk, add short liveness or motion checks where legal, correlate live and async signals, and do not rely on a single artifact, because screen share does not prove integrity.

You have already watched a too-smooth answer and wondered whether you are evaluating Claude, ChatGPT, Copilot, a second laptop, or a synthetic face, without written rules that say what is fair game at this stage.

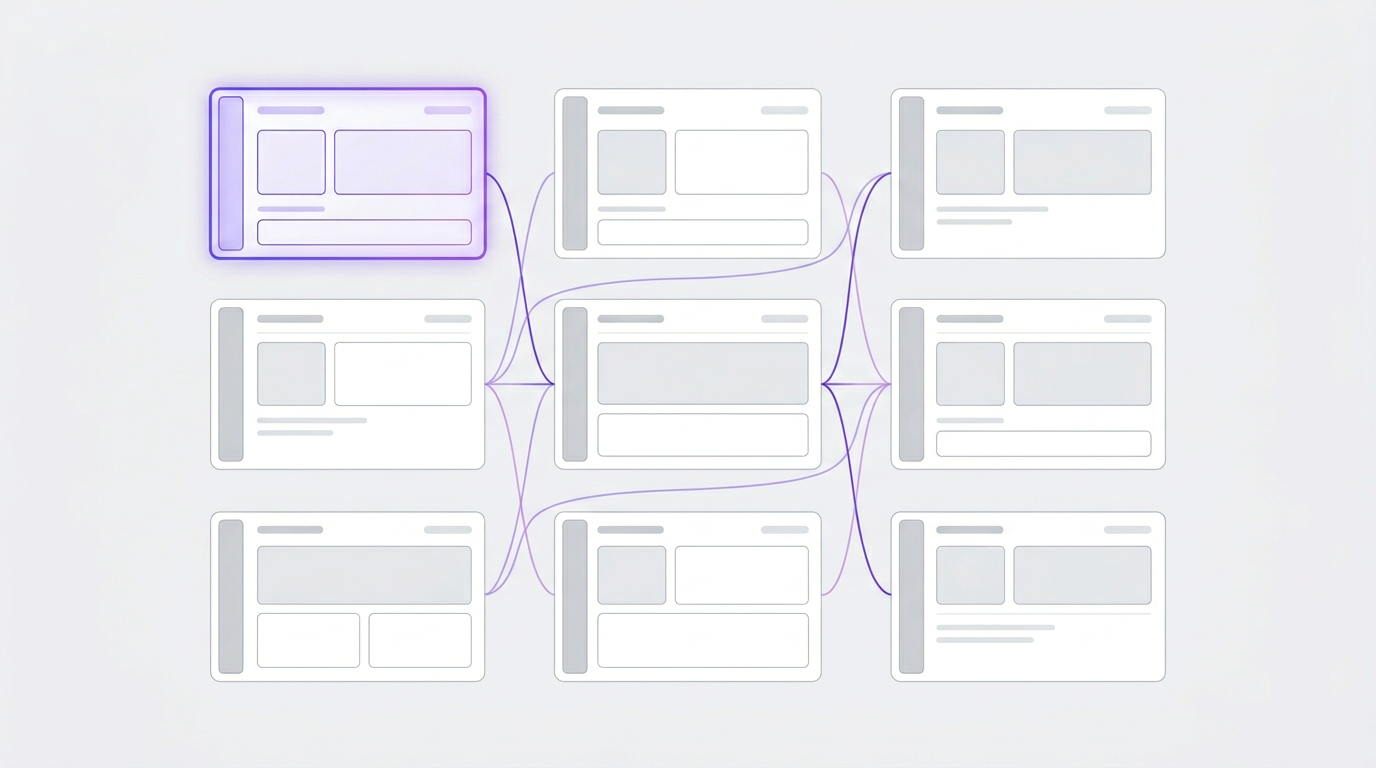

From camera to code: how we stage the assessment

The assessment runs in three places: before and on camera (presence), in the live round (environment and assistance), and on paper (written rules for async work and take-homes). Same bar, different places.

If you merge identity, hidden help, and AI policy into one “integrity score” or “trust score,” you fix the wrong control at the wrong stage and punish candidates who had to guess your unwritten rules.

Residual risk at every stage

Each stage has different failure modes. Naming them keeps your program design honest.

Policy and industry briefings have moved synthetic media and identity-fraud in hiring from novelty to risk you plan for (WEF, DISA). In practice, Metaview and peers still treat face motion glitches as a nudge to review, not proof (Metaview).

Checklist: what to do at each assessment stage

Tables above are the map; this section is the playbook in prose you can hand to interviewers and recruiting ops.

Presence (before and at session start): Where policy and counsel allow, use short, unpredictable checks (for example, a fresh verbal confirmation at session start). Correlate the candidate's voice and story with written work they already submitted under the same rules. Re-verify at offer or before production access, especially if several weeks have passed or the role tier has changed.

Live round (in the call): Publish rules before the session: monitors, phone on desk, browser profile, extensions, and whether inline IDE AI is in or out of bounds. During the session, change the problem so a rehearsed, piped, or pasted solution has to adapt, not as a constant ambush, but as part of a disclosed bar for the round.

Concrete example: The candidate is optimizing a single-region API for p95 latency. Midway, you introduce two constraints sequentially: first at the infrastructure layer (one availability zone becomes unavailable at runtime), then at the data layer (a new payload requirement invalidates their current data model). You keep them separate deliberately, because each stressor targets a different reasoning layer and conflating them prevents you from seeing where thinking actually breaks.

You are not scoring edit speed alone. You are watching whether reasoning genuinely restarts with each new constraint, surfacing new tradeoffs, naming new failure modes, and explicitly reopening prior decisions, or whether only the prose changes while the structure stays frozen.

Then pair on a small, unfamiliar slice of your real codebase (secrets stripped) so public-repo pattern matching matters less. If you use vendor telemetry, route flags to a human reviewer and publish an appeal path. Treat disqualification as a last resort, reached only after someone with hiring authority reads the clip in context.
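The routing rule above can be sketched in a few lines of Python. This is an illustration, not any vendor's API: `Flag`, `ReviewTicket`, `open_review`, the `cluster_threshold` default of two sources, and the appeal address are all hypothetical names and values chosen for the example. The point is structural: the code can open a ticket and set its priority, but it contains no path that rejects a candidate.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Flag:
    source: str  # hypothetical label, e.g. "ide_telemetry" or "video_artifact"
    note: str    # plain-language description of what was observed


@dataclass
class ReviewTicket:
    candidate_id: str
    priority: str                   # "routine" or "elevated"
    observations: List[str]
    appeal_path: str
    decision: Optional[str] = None  # set only by a human with hiring authority


def open_review(
    candidate_id: str,
    flags: List[Flag],
    cluster_threshold: int = 2,                       # assumed value, tune per program
    appeal_path: str = "hiring-appeals@example.com",  # placeholder address
) -> Optional[ReviewTicket]:
    """Turn telemetry flags into a human review ticket, never into a verdict."""
    if not flags:
        return None
    # Several independent sources agreeing matters more than one noisy source
    # firing repeatedly, so count distinct sources rather than raw flags.
    distinct_sources = {flag.source for flag in flags}
    priority = "elevated" if len(distinct_sources) >= cluster_threshold else "routine"
    return ReviewTicket(
        candidate_id=candidate_id,
        priority=priority,
        observations=[f"{flag.source}: {flag.note}" for flag in flags],
        appeal_path=appeal_path,
    )


# Example: two different sources form a cluster, so the ticket is elevated,
# and the decision field stays empty until a person fills it in.
ticket = open_review(
    "cand-001",
    [
        Flag("ide_telemetry", "large paste with no prior typing"),
        Flag("video_artifact", "blur near hairline during head turn"),
    ],
)
```

Keeping `decision` empty by default means any disqualification has to be written by a reviewer, which matches the last-resort stance described above.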

Take-home policy (after, on paper): Publish per-stage rules before candidates spend evening hours on a take-home: what is allowed, what must be cited, and what counts as autonomous work. Surprise rules erode fairness and employer brand.

What to put in your policy before anyone interviews

Most fairness failures here are surprise rules, not “bad candidates.” Publish your stance per stage before candidates invest evenings in take-homes.
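One way to keep a per-stage stance from drifting into unwritten rules is to hold it as data and check it for gaps before you publish. The sketch below is a hypothetical Python structure, not a standard: `StagePolicy`, `stance`, `unwritten_rules`, the tool labels, and every example value are assumptions made for illustration. Your own stages and allowances will differ.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class StagePolicy:
    stage: str
    allowed_tools: Tuple[str, ...]
    banned_tools: Tuple[str, ...]
    must_cite: Tuple[str, ...]    # allowed tools whose use must be disclosed
    max_monitors: Optional[int]   # None means no limit is stated
    phone_on_desk: bool


# Example values only; they are not a recommendation.
POLICIES: Dict[str, StagePolicy] = {
    "live_round": StagePolicy(
        stage="live_round",
        allowed_tools=("official_docs",),
        banned_tools=("browser_llm", "ide_assistant", "second_device"),
        must_cite=(),
        max_monitors=1,
        phone_on_desk=False,
    ),
    "take_home": StagePolicy(
        stage="take_home",
        allowed_tools=("official_docs", "ide_assistant", "browser_llm"),
        banned_tools=("third_party_author",),
        must_cite=("ide_assistant", "browser_llm"),
        max_monitors=None,
        phone_on_desk=True,
    ),
}


def stance(policy: StagePolicy, tool: str) -> str:
    """Return the written stance for a tool, or report that none exists."""
    if tool in policy.banned_tools:
        return "banned"
    if tool in policy.allowed_tools:
        return "allowed, cite it" if tool in policy.must_cite else "allowed"
    return "unspecified"  # a gap: the candidate would have to guess


def unwritten_rules(policy: StagePolicy, tools_to_check: Tuple[str, ...]) -> Tuple[str, ...]:
    """List tools the policy says nothing about, so they can be fixed before publishing."""
    return tuple(t for t in tools_to_check if stance(policy, t) == "unspecified")
```

Running `unwritten_rules(POLICIES["live_round"], ("local_llm", "official_docs"))` on these example values reports `local_llm` as unaddressed, which is the kind of gap a candidate would otherwise discover mid-interview.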

For full-funnel profile and partner controls, see Fake candidates in remote engineering hiring.

One standard from first touch to start date

For a US team working asynchronously with engineers in places like São Paulo or Mexico City, the bar is not typing speed alone. It is judgment, clear written updates, and English under pressure when the thread gets tense. Those are also the signals pure automation reads least reliably, even when the technical profile on paper looks fine. And in a nearshore LATAM market where candidate volume is high and sourcing is fast, networks that optimize for contact-light throughput trade depth of review for coverage. For growth-stage teams, the return on a careful hire compounds well beyond the time you spend on it.

Everything above is about how you design integrity into interviews and written policy. That design only holds if the person who cleared your live round and your take-home bar is the same one who shows up on day one with production access. Most teams do not find out it broke until after that moment.

At Remotely, human talent specialists run the verification layer so that gap does not quietly become your weakest check: ID, references, and background aligned to your stages, with a human review pass when profile and interview disagree. We work from a pool of 7,000+ pre-vetted senior candidates already screened to that bar. Having a partner embedded at every stage also means anomalies do not die in Slack threads; they get flagged, documented, and escalated before the next step, not after the hire closes. You own the process design. We make sure it does not break at the seam where policy ends and identity begins. Tell us what you are hiring for.

Frequently Asked Questions

How do you detect AI cheating in a technical interview?

Start with written rules for that stage: which tools are allowed (browser LLMs versus IDE assistants versus local models), how many monitors are permitted, whether a phone may be on the desk, and what “closed book” means in your environment. During the session, ask follow-up questions that change the constraints of the same problem (latency, failure domains, data size) so a pasted or coached answer has to adapt.

If you use proctoring or IDE telemetry, treat outputs as signals, not verdicts: send anomalies to a human reviewer, document what you observed, and give candidates a clear appeal path before any disqualification. Third-party guidance consistently recommends clusters of weak indicators rather than any single automated flag (Crosschq).

What should we ask in a vendor demo for proctoring, take-homes, or assessment suites?

Vendor demos optimize for happy paths; use the demo to stress the failure paths. For enterprise suites, ask how false positives are triaged, how ATS integration and retention work, and what happens when neurodivergent typing or accents are flagged generically as “suspicious.”

For proctoring-first tools, ask who reviews flags, what the appeal path is, and how cross-border privacy is handled; candidate experience cost often shows up as offer decline rate, not in the room.

For take-home platforms, ask about AI-detection calibration, time boxes, and the default AI stance. Local LLMs on a candidate’s machine may never hit vendor telemetry, so confirm what the tool can and cannot see. Third-party guidance still stresses clusters of indicators, not one automatic flag, when you add tooling on top of your own rules (Crosschq).

What is the difference between deepfakes and AI coding help?

Deepfakes and proxy presence are about whether the person on camera is the one performing the work and whether that presence stays consistent across the session. AI coding help is about whether the solution in this session reflects their reasoning under your stated rules.

For the first case, you typically combine liveness or correlated motion checks, session consistency, and a clear substitution policy. For the second, you combine environment rules, shifting follow-ups, and optional telemetry with human review. Those stages sit in the same hiring pipeline but answer different questions (DISA, WEF).

Should we ban all AI in technical interviews?

No single ban fits every stage. Where you need baseline signal of unassisted problem solving, ban hidden tools and define “hidden” in writing (including second devices and undisclosed plugins). Where the job actually ships with Copilot or similar, align the interview stage with that reality and document the stance before the candidate invests time.

The usual failure mode is unwritten expectations: strong performers look suspicious, weak ones slip through with pasted work, and your team argues after the fact. Write the policy down per stage and review it when the stack changes.

What are signs of synthetic video in Zoom or Teams?

Unnatural blur or artifacting around the mouth or hairline, or during fast head motion, can be a clue that something is off, but vendors such as Metaview treat these as weak signals, not proof. Use them to trigger a human-reviewed next step, never an automatic rejection, and pair them with other checks in the same pipeline, such as voice consistency, problem adaptation, and your published policy on substitutions (Metaview).

Does Remotely use AI in candidate screening?

Remotely combines human specialists with structured vetting so screening is not a single signal or a fully automated gate. Specialists weigh the same kinds of consistency, risk, and fit questions you would care about in-house; if tooling supports that work, it is there to route and triage, not to replace human judgment on who moves forward.

Hiring teams retain choice and control over offers and over how much of the process they run themselves versus with our team. If you need detail on any automated tooling in the current stack or how flags are reviewed, we provide that in writing on request, consistent with what this article argues for: clarity, human review, and no surprise rules.